Scalable Agents

Agentic Applications

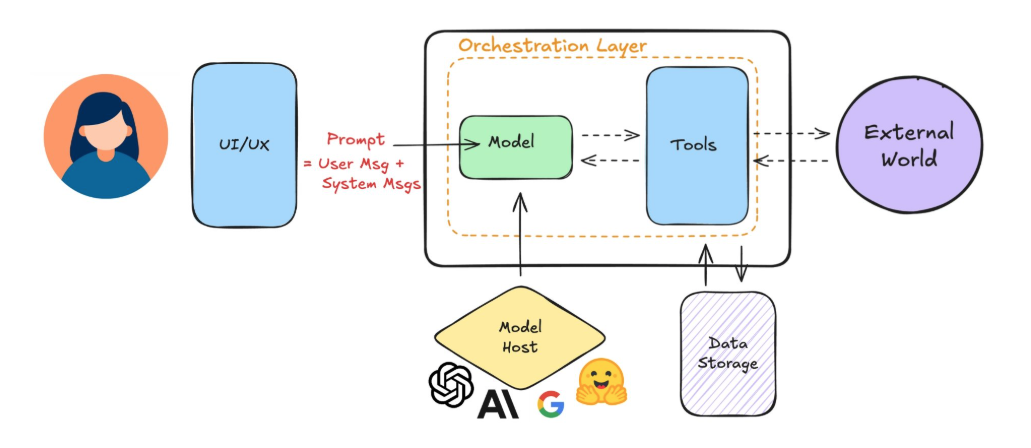

AI agents are one part of a larger system. They usually interact with users through chat boxes, dropdowns, or other input elements, while the model runs separately, often on a server.

- Users interact with agents via conversational interfaces

- Models are hosted separately and can be provider-hosted or self-hosted

- Tools and storage support actions and data collection

Keeping all parts connected ensures the agent works effectively and minimizes failures.

When to Use Agents

Agents are ideal for problems that are complex, multi-step, or open-ended. They shine when tasks require planning, tool use, and adaptability to changing conditions.

✅ Complex problems require multiple steps and tool calls

✅ Open-ended problems benefit from flexible workflows

✅ Agents adapt to evolving data and user preferences

For simple, static tasks, traditional IT solutions may be more efficient. Agents are best where adaptability and multi-step reasoning are needed.

Example:

-

Sending reminder emails

- Limited options and outcomes

- User list does not change

- Better suited for IT automation, not an agent

-

IT support for employees

- Employees ask varied questions about products or software

- Product list changes over time

- Open-ended, complex problem fits an agentic solution

Scaling AI Agents

Scaling agents means they can handle more users, varied inputs, and potential misuse while keeping performance high. There are three pillars which ensures agent remains stable and responsive even as usage grows

- Robust infrastructure and tooling

- Modular design architecture

- Continuous evaluation and feedback loops

Robust Infrastructure and Tooling

Solid infrastructure prevents bottlenecks and supports consistent performance during scaling.

- Compute handles running agent processes

- Storage logs states, chats, and system activity

- Deployment pipelines and tools reduce errors and streamline updates

In addition to compute and storage, we also need reliable deployment pipelines and agent tooling to make updates safe and system operations smooth.

Modular Design Architecture

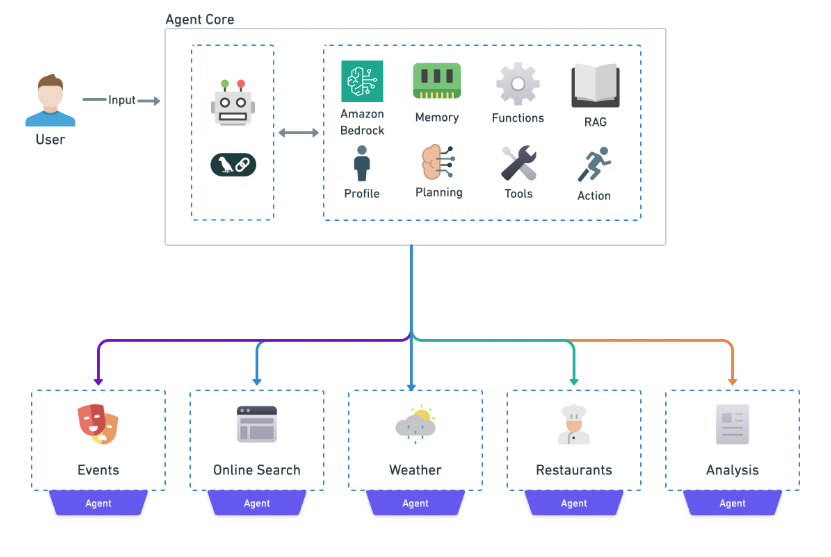

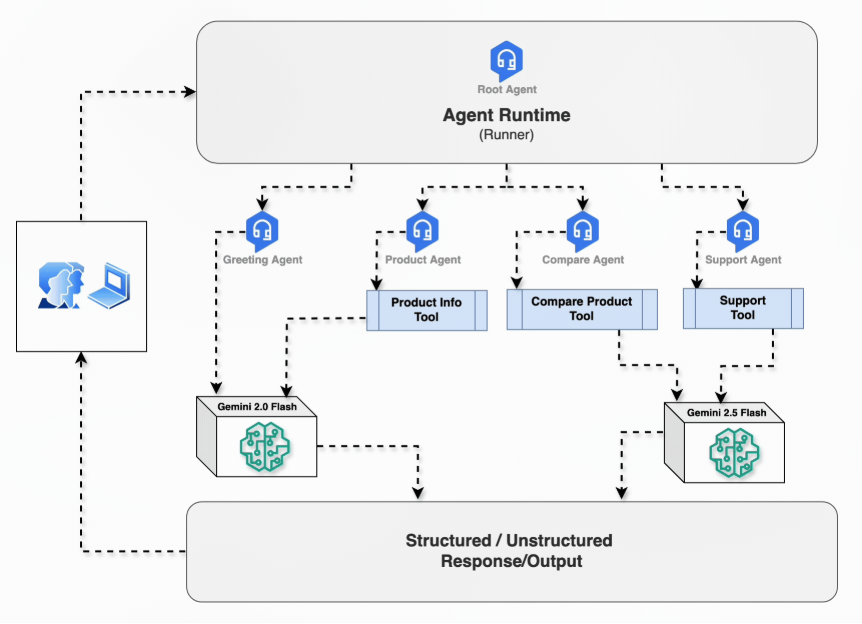

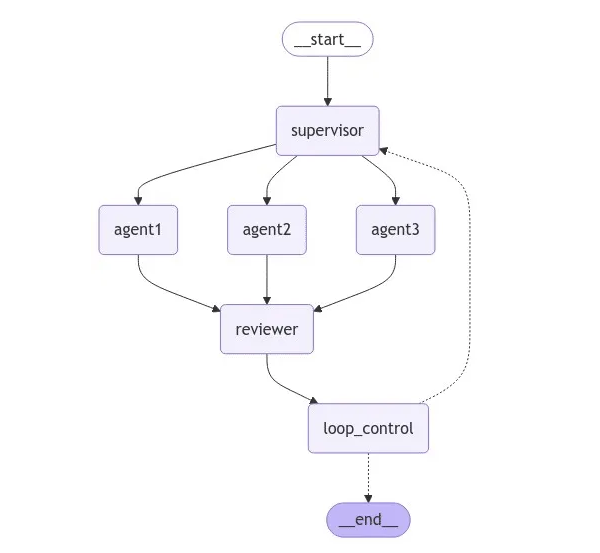

Modular design separates components so they can be developed, updated, and maintained independently.

- Software components like UI, agents, and databases are independent

- Multi-agent systems assign specialized roles to each agent

- Updates to one part do not affect the whole system

Agents themselves can be modular, with each handling specific tasks, which allows complex systems to evolve without breaking other parts.

Example: Customer Support Multi-Agent

In a support system, one agent retrieves information while another responds in a defined tone.

- Retrieval agent handles data gathering

- Response agent communicates with users

- Agents operate in defined domains

Continuous Evaluation/Feedback Loops

Continuous monitoring helps detect issues early and improve performance over time. Track key metrics such as:

- Success rates

- Error rates

- Latency

In addition, collecting user feedback helps refine prompts, improve tool selection, and enhance data quality. Together, monitoring and feedback ensure the agent becomes more reliable and effective as it scales.

Common Failure Modes

Agents often work well in testing, but real users expose weaknesses. As usage grows, inputs become messy, expectations expand, and performance and cost pressures increase. Knowing common failure modes helps you design agents that stay reliable at scale.

- Fragile evaluation

- Intent drift

- Undesirable feedback loops

- Latency bottlenecks

- Cost explosion

Each of these issues appears during growth, and each can be addressed with better design choices.

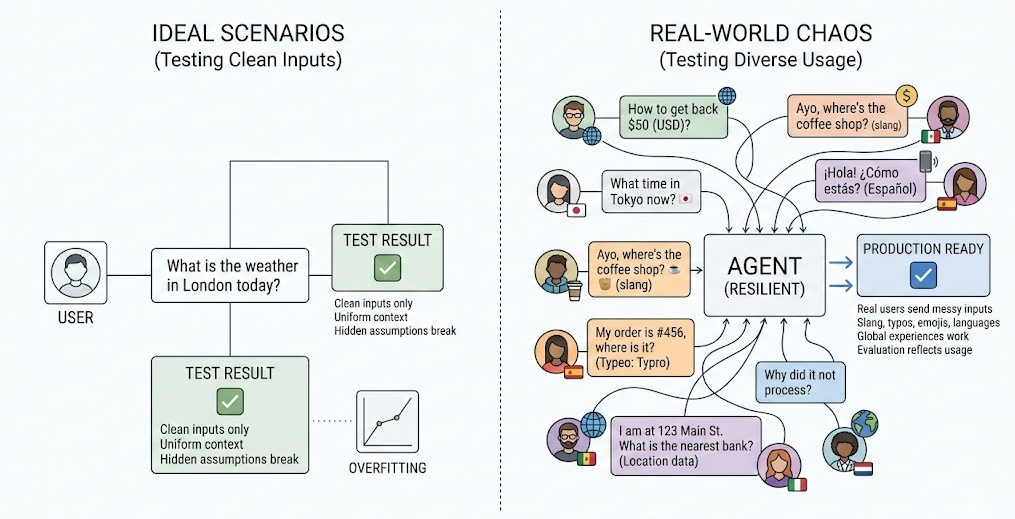

Fragile Evaluation

Agents often fail because they were tested only on clean, ideal examples.

In reality:

- Real users send messy input

- Users use slang, typos, emojis, and different languages

- Hidden assumptions break global experiences

Instead of testing only perfect prompts, use real and diverse queries. Simulate different countries, currencies, time zones, and writing styles. Evaluation should reflect real usage, not ideal scenarios. This makes the agent resilient in production.

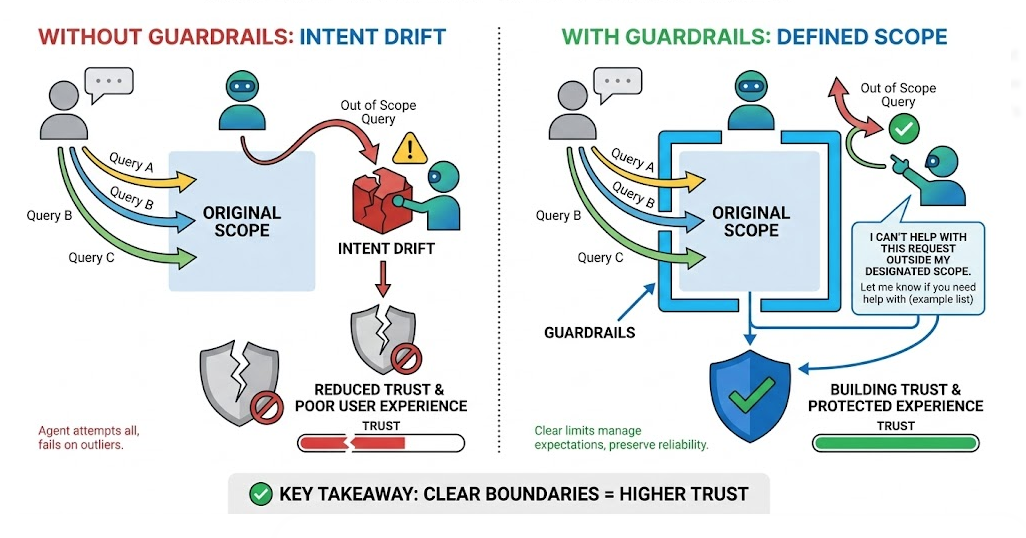

Intent Drift

Users will ask for things the agent was never designed to handle.

- Users go outside the original scope

- Agent tries to answer anyway

- Poor answers reduce trust

To counter intent drifts, define clear boundaries. If a request is out of scope, the agent should say so clearly and politely. Honest limits build more trust than incorrect answers. Guardrails protect both the system and the user experience.

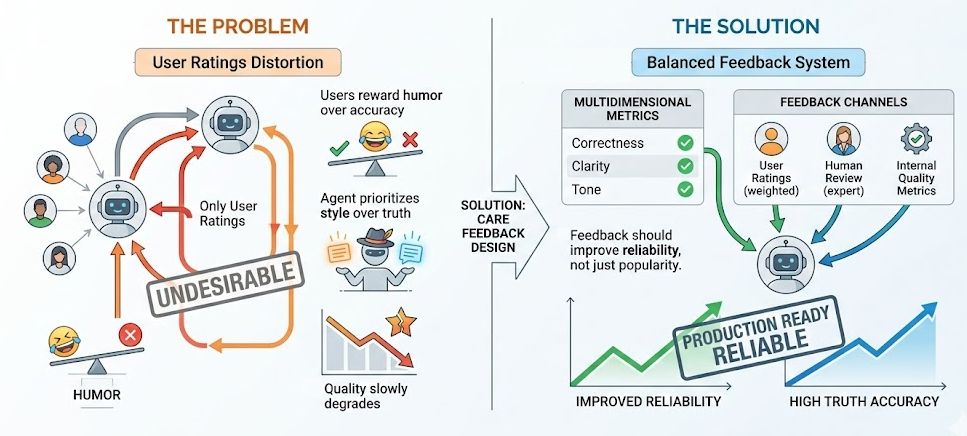

Undesirable Feedback Loops

Optimizing only for user ratings can distort behavior.

- Users reward humor over accuracy

- Agent prioritizes style over truth

- Quality slowly degrades

As a solution, you need to design feedback systems carefully. Combine user ratings with human review and clear metrics like correctness, clarity, and tone. Feedback should improve reliability, not just popularity.

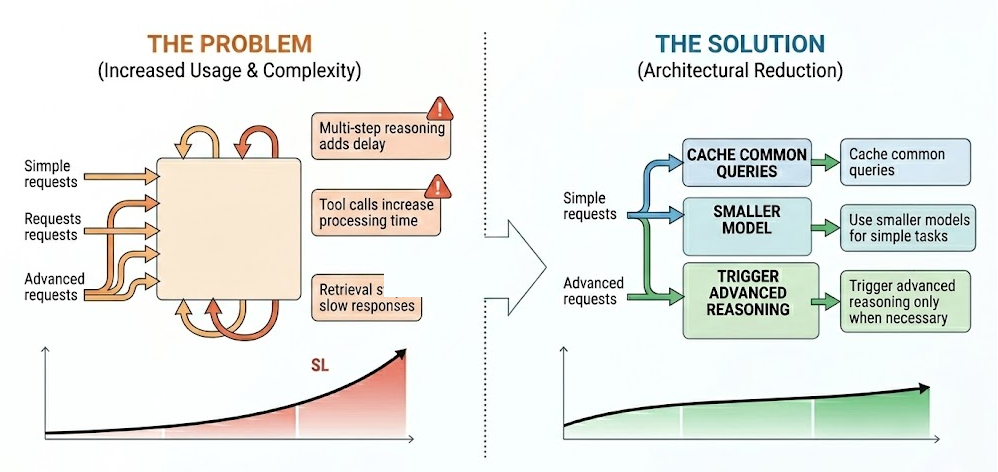

Latency Bottlenecks

As usage increases, response time becomes critical.

- Multi-step reasoning adds delay

- Tool calls increase processing time

- Retrieval steps slow responses

The solution is to reduce latency with architectural decisions:

- Cache common queries

- Use smaller models for simple tasks

- Trigger advanced reasoning only when necessary

Performance should be designed intentionally, not added later. Fast systems feel smarter and more reliable.

Cost Explosion

Scaling multiplies every model call and tool request.

- Long prompts increase token usage

- Multiple tools raise API costs

- Advanced models are expensive

To reduce costs, design with cost awareness from the start:

- Cache repeated results

- Use smaller models when possible

- Remove unnecessary retrieval steps

- Add fair usage limits for expensive features

Most cost savings come from architecture decisions, not small optimizations. A scalable agent is not only accurate and fast, but also cost-efficient.